Sample output of hive --version (from the Hive 1.1.0 setup described later in this article):

Hive 1.1.0

Subversion git://localhost.localdomain/Users/noland/workspaces/hive-apache/hive -r 3b87e226d9f2ff5d69385ed20704302cffefab21

Compiled by noland on Wed Feb 18 16:06:08 PST 2015

From source with checksum bca57a923a7578b7e5e9350ffb165cca1234

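I. Impala Overview

Cloudera Impala provides direct, interactive SQL queries over data stored in Apache Hadoop, whether in HDFS or HBase. In addition to sharing Hive's unified storage platform, Impala uses the same metadata, SQL syntax (Hive SQL), ODBC driver, and user interface (Hue Beeswax). This gives batch-oriented and real-time queries a familiar, unified platform.

II. Impala Installation

1. Installation requirements

(1) Software requirements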

Red Hat Enterprise Linux (RHEL)/CentOS 6.2 (64-bit)

CDH 4.1.0 or later

Hive

MySQL

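(2) Hardware requirements

Join queries load the data sets into memory for computation, so hosts running impalad need a large amount of memory.

2. Installation preparation

(1) Check the operating system version

> more /etc/issue

CentOS release 6.2 (Final)

Kernel \r on an \m

(2) Machines

10.28.169.112 mr5

10.28.169.113 mr6

10.28.169.114 mr7

10.28.169.115 mr8

Roles installed on each machine:

mr5: NameNode, ResourceManager, SecondaryNameNode, Hive, impala-state-store

mr6, mr7, mr8: DataNode, NodeManager, impalad

(3) Users

Create a hadoop user on every machine and set up SSH access between the machines.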
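Setting up SSH access here usually means passwordless login from mr5 to every node. The following is a minimal sketch under assumptions that are not in the original: OpenSSH's ssh-keygen and ssh-copy-id are installed, and the key lives at ~/.ssh/id_rsa. It only prints the commands unless RUN=1 is set.

```shell
#!/usr/bin/env bash
# Sketch: passwordless SSH for the hadoop user from mr5 to all nodes.
# Assumptions (not from the original article): OpenSSH client tools are
# installed and the key pair lives at ~/.ssh/id_rsa.
# By default the commands are only printed; set RUN=1 to execute them.

NODES="mr5 mr6 mr7 mr8"
KEY="${HOME}/.ssh/id_rsa"

run() {
  if [ "${RUN:-0}" = "1" ]; then "$@"; else echo "$*"; fi
}

ssh_setup() {
  # Create the key pair once, with an empty passphrase.
  if [ ! -f "$KEY" ]; then
    run ssh-keygen -t rsa -N "" -f "$KEY"
  fi
  # Install the public key on every node (asks for the password once per node).
  for node in $NODES; do
    run ssh-copy-id -i "${KEY}.pub" "hadoop@${node}"
  done
}

ssh_setup
```

Run it as the hadoop user on mr5, review the printed commands, then rerun with RUN=1.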

(4) Software

Download from the Cloudera website:

Hadoop:

hadoop-2.0.0-cdh4.1.2.tar.gz

hive:

hive-0.9.0-cdh4.1.2.tar.gz

impala:

impala-0.3-1.p0.366.el6.x86_64.rpm

impala-debuginfo-0.3-1.p0.366.el6.x86_64.rpm

impala-server-0.3-1.p0.366.el6.x86_64.rpm

impala-shell-0.3-1.p0.366.el6.x86_64.rpm

Impala dependency package downloads:

4. Installing hadoop-2.0.0-cdh4.1.2

(1) Prepare the package

Log in to mr5 as the hadoop user, upload hadoop-2.0.0-cdh4.1.2.tar.gz to /home/hadoop/, and unpack it:

tar zxvf hadoop-2.0.0-cdh4.1.2.tar.gz

(2) Configure environment variables

On mr5, edit .bash_profile in the hadoop user's home directory /home/hadoop/:

export JAVA_HOME=/usr/jdk1.6.0_30

export JAVA_BIN=${JAVA_HOME}/bin

export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

export JAVA_OPTS="-Djava.library.path=/usr/local/lib -server -Xms1024m -Xmx2048m -XX:MaxPermSize=256m -Djava.awt.headless=true -Dsun.net.client.defaultReadTimeout=60000 -Djmagick.systemclassloader=no -Dnetworkaddress.cache.ttl=300 -Dsun.net.inetaddr.ttl=300"

export HADOOP_HOME=/home/hadoop/hadoop-2.0.0-cdh4.1.2

export HADOOP_PREFIX=$HADOOP_HOME

export HADOOP_MAPRED_HOME=${HADOOP_HOME}

export HADOOP_COMMON_HOME=${HADOOP_HOME}

export HADOOP_HDFS_HOME=${HADOOP_HOME}

export HADOOP_YARN_HOME=${HADOOP_HOME}

export PATH=$PATH:${JAVA_HOME}/bin:${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin

export JAVA_HOME JAVA_BIN PATH CLASSPATH JAVA_OPTS

export HADOOP_LIB=${HADOOP_HOME}/lib

export HADOOP_CONF_DIR=${HADOOP_HOME}/etc/hadoop

(3) Edit the configuration files

On mr5, as the hadoop user, edit the Hadoop configuration files (directory: hadoop-2.0.0-cdh4.1.2/etc/hadoop).

(1) slaves:

Add the following nodes:

mr6

mr7

mr8

(2) hadoop-env.sh:

Add the following environment variables:

export JAVA_HOME=/usr/jdk1.6.0_30

export HADOOP_HOME=/home/hadoop/hadoop-2.0.0-cdh4.1.2

export HADOOP_PREFIX=${HADOOP_HOME}

export HADOOP_MAPRED_HOME=${HADOOP_HOME}

export HADOOP_COMMON_HOME=${HADOOP_HOME}

export HADOOP_HDFS_HOME=${HADOOP_HOME}

export HADOOP_YARN_HOME=${HADOOP_HOME}

export PATH=$PATH:${JAVA_HOME}/bin:${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin

export JAVA_HOME JAVA_BIN PATH CLASSPATH JAVA_OPTS

export HADOOP_LIB=${HADOOP_HOME}/lib

export HADOOP_CONF_DIR=${HADOOP_HOME}/etc/hadoop

(3) core-site.xml:

fs.default.name

hdfs://mr5:9000

The name of the default file system. Either the literal string "local" or a host:port for NDFS.

true

io.native.lib.available

true

hadoop.tmp.dir

/home/hadoop/tmp

A base for other temporary directories.
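The listing above has lost its XML tags, so here is a hedged sketch of what the file might look like. It assumes the stray "true" after the fs.default.name description was a `<final>` element, which is a guess based on common Hadoop configuration practice. The function name write_core_site and the scratch-directory default are illustrative only.

```shell
#!/usr/bin/env bash
# Sketch: write a core-site.xml with the three properties listed above.
# Assumption: the "true" following fs.default.name was <final>true</final>.

write_core_site() {
  # $1: directory that receives core-site.xml
  cat > "$1/core-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://mr5:9000</value>
    <final>true</final>
  </property>
  <property>
    <name>io.native.lib.available</name>
    <value>true</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/home/hadoop/tmp</value>
  </property>
</configuration>
EOF
}

# Default to a scratch directory so an existing file is never overwritten
# by accident; set CONF_DIR to ${HADOOP_HOME}/etc/hadoop when ready.
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"
write_core_site "$CONF_DIR"
echo "wrote ${CONF_DIR}/core-site.xml"
```

The other *-site.xml files in this section follow the same name/value layout.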

(4) hdfs-site.xml:

dfs.namenode.name.dir

file:/home/hadoop/dfsdata/name

Determines where on the local filesystem the DFS name node should store the name table. If this is a comma-delimited list of directories, then the name table is replicated in all of the directories, for redundancy.

true

dfs.datanode.data.dir

file:/home/hadoop/dfsdata/data

Determines where on the local filesystem a DFS data node should store its blocks. If this is a comma-delimited list of directories, then data will be stored in all named directories, typically on different devices. Directories that do not exist are ignored.

true

dfs.replication

3

dfs.permission

false

(5) mapred-site.xml:

mapreduce.framework.name

yarn

mapreduce.job.tracker

hdfs://mr5:9001

true

mapreduce.task.io.sort.mb

512

mapreduce.task.io.sort.factor

100

mapreduce.reduce.shuffle.parallelcopies

50

mapreduce.cluster.temp.dir

file:/home/hadoop/mapreddata/system

true

mapreduce.cluster.local.dir

file:/home/hadoop/mapreddata/local

true

(6) yarn-env.sh:

Add the following environment variables:

export JAVA_HOME=/usr/jdk1.6.0_30

export HADOOP_HOME=/home/hadoop/hadoop-2.0.0-cdh4.1.2

export HADOOP_PREFIX=${HADOOP_HOME}

export HADOOP_MAPRED_HOME=${HADOOP_HOME}

export HADOOP_COMMON_HOME=${HADOOP_HOME}

export HADOOP_HDFS_HOME=${HADOOP_HOME}

export HADOOP_YARN_HOME=${HADOOP_HOME}

export PATH=$PATH:${JAVA_HOME}/bin:${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin

export JAVA_HOME JAVA_BIN PATH CLASSPATH JAVA_OPTS

export HADOOP_LIB=${HADOOP_HOME}/lib

export HADOOP_CONF_DIR=${HADOOP_HOME}/etc/hadoop

(7) yarn-site.xml:

yarn.resourcemanager.address

mr5:8080

yarn.resourcemanager.scheduler.address

mr5:8081

yarn.resourcemanager.resource-tracker.address

mr5:8082

yarn.nodemanager.aux-services

mapreduce.shuffle

yarn.nodemanager.aux-services.mapreduce.shuffle.class

org.apache.hadoop.mapred.ShuffleHandler

yarn.nodemanager.local-dirs

file:/home/hadoop/nmdata/local

the local directories used by the nodemanager

yarn.nodemanager.log-dirs

file:/home/hadoop/nmdata/log

the directories used by Nodemanagers as log directories
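As a rough illustration, the yarn-site.xml settings above can be generated from name/value pairs instead of being typed by hand. The helper names prop and yarn_site are made up for this sketch; the property names and values are the ones listed above, and the descriptions are omitted.

```shell
#!/usr/bin/env bash
# Sketch: generate yarn-site.xml from the name/value pairs listed above.

# Emit one <property> element for a name ($1) and a value ($2).
prop() {
  printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' "$1" "$2"
}

yarn_site() {
  echo '<?xml version="1.0"?>'
  echo '<configuration>'
  prop yarn.resourcemanager.address mr5:8080
  prop yarn.resourcemanager.scheduler.address mr5:8081
  prop yarn.resourcemanager.resource-tracker.address mr5:8082
  prop yarn.nodemanager.aux-services mapreduce.shuffle
  prop yarn.nodemanager.aux-services.mapreduce.shuffle.class org.apache.hadoop.mapred.ShuffleHandler
  prop yarn.nodemanager.local-dirs file:/home/hadoop/nmdata/local
  prop yarn.nodemanager.log-dirs file:/home/hadoop/nmdata/log
  echo '</configuration>'
}

# Prints to stdout; redirect to the real file once the output looks right,
# e.g. yarn_site > "${HADOOP_CONF_DIR}/yarn-site.xml"
yarn_site
```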

(4) Copy to the other nodes

(1) After finishing steps 2 and 3 on mr5, repackage hadoop-2.0.0-cdh4.1.2:

rm hadoop-2.0.0-cdh4.1.2.tar.gz

tar zcvf hadoop-2.0.0-cdh4.1.2.tar.gz hadoop-2.0.0-cdh4.1.2

Then copy hadoop-2.0.0-cdh4.1.2.tar.gz to mr6, mr7, and mr8 (and unpack it there):

scp /home/hadoop/hadoop-2.0.0-cdh4.1.2.tar.gz hadoop@mr6:/home/hadoop/

scp /home/hadoop/hadoop-2.0.0-cdh4.1.2.tar.gz hadoop@mr7:/home/hadoop/

scp /home/hadoop/hadoop-2.0.0-cdh4.1.2.tar.gz hadoop@mr8:/home/hadoop/

(2) Copy the hadoop user's environment file .bash_profile from mr5 to mr6, mr7, and mr8:

scp /home/hadoop/.bash_profile hadoop@mr6:/home/hadoop/

scp /home/hadoop/.bash_profile hadoop@mr7:/home/hadoop/

scp /home/hadoop/.bash_profile hadoop@mr8:/home/hadoop/

After copying, run the following in /home/hadoop/ on mr5, mr6, mr7, and mr8:

source .bash_profile

so that the environment variables take effect.

(5) Start HDFS and YARN

Once all of the steps above are complete, log in to mr5 as the hadoop user and run, in order:

hdfs namenode -format

start-dfs.sh

start-yarn.sh

Check with the jps command:

mr5 should be running the NameNode, ResourceManager, and SecondaryNameNode processes;

mr6, mr7, and mr8 should be running the DataNode and NodeManager processes.
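The jps check can be scripted rather than read by eye. This sketch assumes only that jps prints one "pid class-name" pair per line; the function name missing_daemons is made up for illustration, and the example feeds it canned output with invented PIDs.

```shell
#!/usr/bin/env bash
# Sketch: report which expected daemons are absent from `jps` output.

# Usage: jps | missing_daemons NameNode ResourceManager ...
# Prints each expected daemon that is absent; prints nothing when all are up.
missing_daemons() {
  jps_listing="$(cat)"
  for daemon in "$@"; do
    # -w matches whole words, so NameNode does not match SecondaryNameNode.
    echo "$jps_listing" | grep -qw "$daemon" || echo "$daemon"
  done
}

# Example with canned master-node output (the PIDs are invented).
printf '2101 NameNode\n2290 SecondaryNameNode\n2456 ResourceManager\n2831 Jps\n' |
  missing_daemons NameNode SecondaryNameNode ResourceManager
```

On the real cluster, pipe live output into it: jps | missing_daemons NameNode ResourceManager SecondaryNameNode on mr5, and jps | missing_daemons DataNode NodeManager on mr6, mr7, and mr8.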

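(6) Verify the installation

Check node health and job execution as follows:

Access through a browser (the local machine needs hosts entries for the cluster nodes).

5. Installing hive-0.9.0-cdh4.1.2

(1) Prepare the package

As the hadoop user, upload hive-0.9.0-cdh4.1.2.tar.gz to /home/hadoop/ on mr5 and unpack it:

tar zxvf hive-0.9.0-cdh4.1.2.tar.gz

(2) Configure environment variables

Add the following environment variables to .bash_profile:

export HIVE_HOME=/home/hadoop/hive-0.9.0-cdh4.1.2

export PATH=$PATH:${JAVA_HOME}/bin:${HADOOP_HOME}/bin:${HADOOP_HOME}/sbin:${HIVE_HOME}/bin

export HIVE_CONF_DIR=$HIVE_HOME/conf

export HIVE_LIB=$HIVE_HOME/lib

Then run the following command so that the variables take effect:

. .bash_profile

(3) Edit the configuration files

Edit the Hive configuration (directory: hive-0.9.0-cdh4.1.2/conf/).

Create hive-site.xml under hive-0.9.0-cdh4.1.2/conf/ and add the following settings: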

hive.metastore.local

true

javax.jdo.option.ConnectionURL

jdbc:mysql://10.28.169.61:3306/hive_impala?createDatabaseIfNotExist=true

javax.jdo.option.ConnectionDriverName

com.mysql.jdbc.Driver

javax.jdo.option.ConnectionUserName

hadoop

javax.jdo.option.ConnectionPassword

123456

hive.security.authorization.enabled

false

hive.security.authorization.createtable.owner.grants

ALL

hive.querylog.location

${user.home}/hive-logs/querylog

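(4) Verify the installation

After completing the steps above, verify that Hive was installed successfully.

Run hive on the mr5 command line and enter "show tables;". Output like the following means Hive is installed correctly:

> hive

hive> show tables;

OK

Time taken: 18.952 seconds

hive>

6. Installing Impala

Notes:

(1) Steps 1 through 4 below are run as root on each of mr5, mr6, mr7, and mr8.

(2) Step 5 below is run as the hadoop user.

(1) Install the dependency packages:

Install mysql-connector-java:

yum install mysql-connector-java

Install bigtop:

rpm -ivh bigtop-utils-0.4+300-1.cdh4.0.1.p0.1.el6.noarch.rpm

Install libevent:

rpm -ivh libevent-1.4.13-4.el6.x86_64.rpm

If other dependency packages are needed, they can be downloaded from:

http://mirror.bit.edu.cn/centos/6.3/os/x86_64/Packages/

(2) Install the Impala RPMs, one at a time: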

rpm -ivh impala-0.3-1.p0.366.el6.x86_64.rpm

rpm -ivh impala-server-0.3-1.p0.366.el6.x86_64.rpm

rpm -ivh impala-debuginfo-0.3-1.p0.366.el6.x86_64.rpm

rpm -ivh impala-shell-0.3-1.p0.366.el6.x86_64.rpm

(3) Locate the Impala installation directory

After steps 1 and 2, run:

find / -name impala

Output:

/usr/lib/debug/usr/lib/impala

/usr/lib/impala

/var/run/impala

/var/log/impala

/var/lib/alternatives/impala

/etc/default/impala

/etc/alternatives/impala

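The Impala installation directory is /usr/lib/impala.

(4) Configure Impala

Create a conf directory under the Impala installation directory /usr/lib/impala, and copy into it core-site.xml and hdfs-site.xml from Hadoop's conf directory and hive-site.xml from Hive's conf directory.

Add the following to core-site.xml: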

dfs.client.read.shortcircuit

true

dfs.client.read.shortcircuit.skip.checksum

false

Add the following to the hdfs-site.xml files of both Hadoop and Impala, then restart Hadoop and Impala:

dfs.datanode.data.dir.perm

755

dfs.block.local-path-access.user

hadoop

dfs.datanode.hdfs-blocks-metadata.enabled

true

(5) Start the services

(1) Start the Impala state store on mr5:

> GLOG_v=1 nohup statestored -state_store_port=24000 &

If statestored starts normally, its log can be viewed at /tmp/statestored.INFO. If something goes wrong, check /tmp/statestored.ERROR for the error details.

(2) Start impalad on mr6, mr7, and mr8:

mr6:

> GLOG_v=1 nohup impalad -state_store_host=mr5 -nn=mr5 -nn_port=9000 -hostname=mr6 -ipaddress=10.28.169.113 &

mr7:

> GLOG_v=1 nohup impalad -state_store_host=mr5 -nn=mr5 -nn_port=9000 -hostname=mr7 -ipaddress=10.28.169.114 &

mr8:

> GLOG_v=1 nohup impalad -state_store_host=mr5 -nn=mr5 -nn_port=9000 -hostname=mr8 -ipaddress=10.28.169.115 &

If impalad starts normally, its log can be viewed at /tmp/impalad.INFO. If something goes wrong, check /tmp/impalad.ERROR for the error details.
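The three impalad commands differ only in host name and IP address, so they can be generated from a small table. This sketch just prints the commands, using the same flags and values as above and executing nothing. The function name impalad_commands is illustrative.

```shell
#!/usr/bin/env bash
# Sketch: print the impalad start command for each worker node.
# Flags and values are those used in the article; nothing is executed here.

impalad_commands() {
  while read -r host ip; do
    echo "GLOG_v=1 nohup impalad -state_store_host=mr5 -nn=mr5 -nn_port=9000 -hostname=${host} -ipaddress=${ip} &"
  done <<'EOF'
mr6 10.28.169.113
mr7 10.28.169.114
mr8 10.28.169.115
EOF
}

impalad_commands
```

Each printed line is meant to be run on the host it names.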

(6) Use the shell

Start the Impala shell with impala-shell, connect to each impalad host (mr6, mr7, mr8) in turn, and refresh the metadata; after that, queries can be run. The commands are as follows (they can be run from any node):

> impala-shell

[Not connected] > connect mr6:21000

[mr6:21000] > refresh

[mr6:21000] > connect mr7:21000

[mr7:21000] > refresh

[mr7:21000] > connect mr8:21000

[mr8:21000] > refresh

(7) Verify the installation

Start the Impala shell again, connect to an impalad host, refresh the metadata, and list the databases (this can be run from any node):

> impala-shell

[Not connected] > connect mr6:21000

[mr6:21000] > refresh

[mr6:21000] > show databases

default

[mr6:21000] >

Output like the above means the installation succeeded.

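Preface

If you think installing Impala means installing only Impala, you are badly mistaken. Impala relies on Hive to store its metadata, so you need Hive; Hive depends on Hadoop, so you need Hadoop; Hive keeps its metadata in a relational database such as MySQL, so you need MySQL. Impala also depends on Sentry's jars, so Sentry has to be downloaded as well. Worst of all, if you do not install Impala through Cloudera Manager, you must make sure yourself that the versions of all these components are compatible; the slightest mismatch causes all kinds of problems. After falling into many of these traps, here are my installation steps. Hadoop was already installed in my environment, so this article does not cover installing it; that is easy to look up elsewhere. This article installs from RPM packages; readers who want to build from source and deploy can see the article "Compiling and installing Impala 2.12.0".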

MySQL-client-5.6.41-1.el6.x86_64.rpm

MySQL-server-5.6.41-1.el6.x86_64.rpm

rm -rf /etc/my.cnf.*

rm -rf /etc/my.cnf.*

rpm -ivh MySQL-server-5.6.41-1.el6.x86_64.rpm

rpm -ivh MySQL-client-5.6.41-1.el6.x86_64.rpm

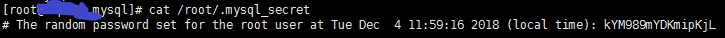

查看mysql初始登录密码

service mysql start

mysql –uroot –p初始密码

mysql >set PASSWORD=PASSWORD(‘密码’);

mysql> GRANT ALL PRIVILEGES ON . TO ‘root’@’%’ IDENTIFIED BY ‘root’ WITH GRANT OPTION;

mysql> GRANT ALL PRIVILEGES ON . TO ‘root’@‘hadoop02’ IDENTIFIED BY ‘root’ WITH GRANT OPTION;

mysql> GRANT ALL PRIVILEGES ON . TO ‘root’@’%’ IDENTIFIED BY ‘root’ WITH GRANT OPTION;

mysql> FLUSH PRIVILEGES;

apache-hive-1.1.0-bin.tar

tar -zxvf apache-hive-1.1.0-bin.tar.gz

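Edit /etc/profile and add

Run source /etc/profile

Run hive --version

The output should report the Hive version (Hive 1.1.0 in this setup).

Create hive-site.xml in Hive's conf directory and add the following content

cp mysql-connector-java-5.1.47-bin.jar lib/

mysql> create database hive;

Query OK, 1 row affected (0.00 sec)

Go to Hive's bin directory

schematool -dbType mysql -initSchema

On success, the output is as follows: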

[root@fff apache-hive-1.1.0-bin]# hive

Logging initialized using configuration in jar:file:/usr/lib/hive/apache-hive-1.1.0-bin/lib/hive-common-1.1.0.jar!/hive-log4j.properties

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/usr/lib/cluster001/SERVICE-HADOOP-97a10ac9a6f044fd8e844b9f6afce095/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/usr/lib/hive/apache-hive-1.1.0-bin/lib/hive-jdbc-1.1.0-standalone.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

hive> quit;

sentry-1.5.1+cdh5.14.2+444-1.cdh5.14.2.p0.11.el6.noarch.rpm

rpm -ivh --nodeps sentry-1.5.1+cdh5.14.2+444-1.cdh5.14.2.p0.11.el6.noarch.rpm

bigtop-utils-0.7.0+cdh5.3.3+0-1.cdh5.3.3.p0.8.el6.noarch.rpm

impala-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

impala-catalog-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

impala-debuginfo-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

impala-server-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

impala-shell-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

impala-state-store-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

impala-udf-devel-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

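Note: on a Hadoop cluster, impala-server and impala-shell need to be installed on every node that runs a DataNode, because Impala is an MPP architecture and processes requests in parallel through the servers distributed across the nodes.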

rpm -ivh --nodeps impala-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

rpm -ivh --nodeps impala-catalog-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

rpm -ivh --nodeps impala-debuginfo-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

rpm -ivh --nodeps impala-server-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

rpm -ivh --nodeps impala-shell-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

rpm -ivh --nodeps impala-state-store-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

rpm -ivh --nodeps impala-udf-devel-2.12.0+cdh5.15.1+0-1.cdh5.15.1.p0.4.el7.x86_64.rpm

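After the RPM installation, impala/lib contains many dangling symlinks; delete them all:

cd /usr/lib/impala/lib

sudo rm -rf avro*.jar

sudo rm -rf hadoop-*.jar

sudo rm -rf hive-*.jar

sudo rm -rf hbase-*.jar

sudo rm -rf parquet-hadoop-bundle.jar

sudo rm -rf sentry-*.jar

sudo rm -rf zookeeper.jar

sudo rm -rf libhadoop.so

sudo rm -rf libhadoop.so.1.0.0

sudo rm -rf libhdfs.so

sudo rm -rf libhdfs.so.0.0.0

After deleting them, recreate the symlinks with a script.

Note: the jar versions in the script are those of my own environment. Do not use it as-is; adjust the versions to your environment, otherwise the symlink targets still will not be found.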
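The author's relinking script is not included in this text. What follows is only a generic sketch of the idea, not a reconstruction of that script: it links every file matching a glob from a source directory into Impala's lib directory. The function name relink, the demo file names, and the choice to keep the versioned file name as the link name are all assumptions. Check which link names your Impala package actually expects before using anything like this.

```shell
#!/usr/bin/env bash
# Sketch: recreate symlinks in Impala's lib directory.
# This is NOT the author's script; names and layout are assumptions.

# relink <source-dir> <impala-lib-dir> <glob>...
# Links every file in <source-dir> matching one of the globs into
# <impala-lib-dir>, keeping the file's own name as the link name.
relink() {
  src="$1"; dest="$2"; shift 2
  for pattern in "$@"; do
    for jar in "$src"/$pattern; do
      [ -e "$jar" ] || continue          # the glob matched nothing
      ln -sfn "$jar" "$dest/$(basename "$jar")"
    done
  done
}

# Self-contained demo in a scratch directory (file names are placeholders).
demo="$(mktemp -d)"
mkdir -p "$demo/hadoop" "$demo/impala-lib"
touch "$demo/hadoop/hadoop-common-0.0.0.jar" "$demo/hadoop/hadoop-hdfs-0.0.0.jar"
relink "$demo/hadoop" "$demo/impala-lib" 'hadoop-*.jar'
ls -l "$demo/impala-lib"
```

In a real environment the call would point at the actual jar directories, for example relink "$HIVE_HOME/lib" /usr/lib/impala/lib 'hive-*.jar', run with sufficient privileges.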

rpm -ivh bigtop-utils-0.7.0+cdh5.3.3+0-1.cdh5.3.3.p0.8.el6.noarch.rpm

cd /etc/default/

vim bigtop-utils

Add: export JAVA_HOME=/soft/java/jdk1.8.0_65 (use your own JAVA_HOME)

source /etc/default/bigtop-utils

cp $HADOOP_HOME/etc/hadoop/hdfs-site.xml /etc/impala/conf

Edit hdfs-site.xml

cp $HADOOP_HOME/etc/hadoop/core-site.xml /etc/impala/conf

Edit core-site.xml

cp $HIVE_HOME/conf/hive-site.xml /etc/impala/conf

This step is important; I was badly burned by it.

cp /usr/lib/hive/apache-hive-1.1.0-bin/lib/mysql-connector-java-5.1.47-bin.jar /usr/share/java/mysql-connector-java.jar

vi /etc/default/impala

After editing, it looks like this:

IMPALA_CATALOG_SERVICE_HOST=127.0.0.1
IMPALA_STATE_STORE_HOST=127.0.0.1
IMPALA_STATE_STORE_PORT=24000
IMPALA_BACKEND_PORT=22000
IMPALA_LOG_DIR=/var/log/impala
IMPALA_CATALOG_ARGS=" -log_dir=${IMPALA_LOG_DIR} "
IMPALA_STATE_STORE_ARGS=" -log_dir=${IMPALA_LOG_DIR} -state_store_port=${IMPALA_STATE_STORE_PORT}"
IMPALA_SERVER_ARGS=" \
    -log_dir=${IMPALA_LOG_DIR} \
    -catalog_service_host=${IMPALA_CATALOG_SERVICE_HOST} \
    -state_store_port=${IMPALA_STATE_STORE_PORT} \
    -use_statestore \
    -state_store_host=${IMPALA_STATE_STORE_HOST} \
    -be_port=${IMPALA_BACKEND_PORT} \
    -kudu_master_hosts=128-39:7051 "
ENABLE_CORE_DUMPS=true

Configure Hadoop's hdfs-site.xml

Add

Create the /var/run/hadoop-hdfs directory

Restart Hadoop

hive --service metastore &

hive --service hiveserver2 &

service impala-state-store start

service impala-catalog start

service impala-server start

show databases; // verify that it works
